How I used “Vibe Coding” and 25 years of experience to tame a liquid-cooled supercomputer in two weeks.


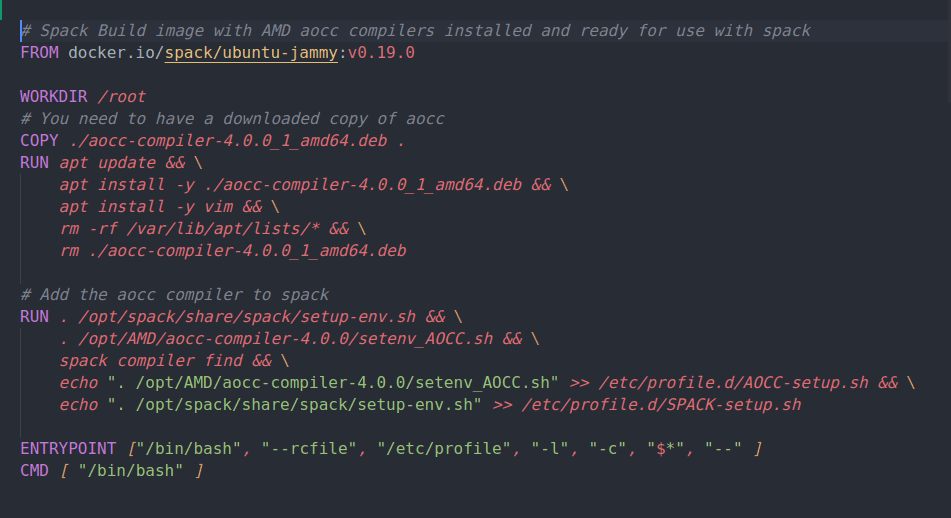

AMD recently released version 4.0 of its AOCC compiler, which adds AVX-512 support for the Zen 4 architecture. This post details building a Docker image containing the Spack package manager/build system together with the AMD AOCC v4.0.0 compilers. This image will serve as the build stage for multi-stage Dockerfiles used to compile scientific applications and benchmarks with targeted Zen 3/Zen 4 optimizations. It is the first step in that process.

This post presents scientific application performance testing on the new AMD Ryzen 7950X. I am impressed! I tested seven applications with heavy parallel numerical compute workloads. The 7950X outperformed the Ryzen 5950X by as much as 25-40%. For some of the applications, it delivered nearly 50% of the performance of the much larger and more expensive 64-core Threadripper Pro 5995WX. That’s remarkable for a $700 CPU! The Ryzen 7950X is not in the same platform class as the Threadripper Pro, but it is a respectable, budget-friendly numerical computing processor.

We’ve been curious about the performance of WSL for scientific applications and decided to run a few relevant benchmarks. This is also a teaser for some hardware-specific, optimized application containerization that I’ve been working on!

NVIDIA Enroot has a unique feature, “Enroot bundles”, that lets you easily create an executable, self-contained, single-file package containing a container image AND the runtime needed to start it! The resulting package runs itself on a system with or without Enroot installed.

Enroot is a simple, modern way to run “docker” or OCI containers. It provides an unprivileged user “sandbox” that integrates easily with a “normal” end-user workflow. I like it for running development environments, and especially for running NVIDIA NGC containers. In this post I’ll go through the steps for installing Enroot, along with some simple usage examples, including running NVIDIA NGC containers.

It’s time for a “Docker with NVIDIA GPU support” update. This post will guide you through a useful workstation setup (including user namespaces and performance tuning) with the new versions of Docker and the NVIDIA Container Toolkit.

Docker is a great workstation tool. It is mostly used for command-line applications or servers, but what if you want to run an application in a container AND use an X Window GUI with it? What if you are doing CUDA development work that includes OpenGL graphics visualization? You CAN do that!
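As a minimal sketch of the common approach (not necessarily the exact setup from the post), the container gets the host's DISPLAY variable and X11 Unix socket, and the GPUs are requested with `--gpus all`. This assumes a local X server with its socket in `/tmp/.X11-unix` and, for GPU access, Docker 19.03+ with the NVIDIA Container Toolkit installed. The helper `x11_docker_command` and the image name `my-cuda-gl-image` are hypothetical.

```python
import os
import shlex
import subprocess
import sys


def x11_docker_command(image, display=None, gpus=True, command=None):
    """Build a `docker run` argument list for a GUI app using the host X server.

    Assumptions (hypothetical helper): a local X11 display whose socket is in
    /tmp/.X11-unix, and, when gpus=True, Docker 19.03+ with the NVIDIA
    Container Toolkit so that `--gpus all` is accepted.
    """
    display = display or os.environ.get("DISPLAY", ":0")
    args = [
        "docker", "run", "--rm",
        # Tell X clients in the container which display to draw on.
        "-e", f"DISPLAY={display}",
        # Share the host's X11 Unix socket with the container.
        "-v", "/tmp/.X11-unix:/tmp/.X11-unix",
    ]
    if gpus:
        # Expose all NVIDIA GPUs to the container.
        args += ["--gpus", "all"]
        # OpenGL needs the "graphics" driver capability (default is compute,utility).
        args += ["-e", "NVIDIA_DRIVER_CAPABILITIES=compute,utility,graphics"]
    args.append(image)
    if command:
        args += list(command)
    return args


if __name__ == "__main__":
    # Hypothetical image name; substitute your own CUDA/OpenGL image.
    cmd = x11_docker_command("my-cuda-gl-image", command=["glxgears"])
    print(shlex.join(cmd))
    # Only launch the container when explicitly asked to.
    if "--run" in sys.argv:
        subprocess.run(cmd, check=True)
```

Running the script prints the assembled command; pass `--run` to launch it. Depending on your X server's access control, you may first need to allow local connections with `xhost +local:`.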

Getting Docker and the NVIDIA-Docker runtime working on Ubuntu 19.04 makes this new and (currently) mostly unsupported Linux distribution a lot more useful. In this post I’ll go through the steps I used to get everything working nicely.

Ubuntu 19.04 will be released soon, so I decided to see if CUDA 10.1 could be installed on it. Yes, it can, and it seems to work fine. In this post I walk through the install and show that Docker and nvidia-docker also work. I also ran the TensorFlow 2.0 alpha on the Ubuntu 19.04 beta.