GTC this year was intense. It was the first time I’ve sat in a “rock star” keynote like that, and the energy was palpable. But there was also a weird tension in the air. I, along with many others, was surprised by how hard Jensen went in on OpenClaw and the GB10-powered DGX Spark. The media, predictably, was rife with mocking memes that called Jensen a “shovel salesman” selling shovels to a gold-rush crowd.

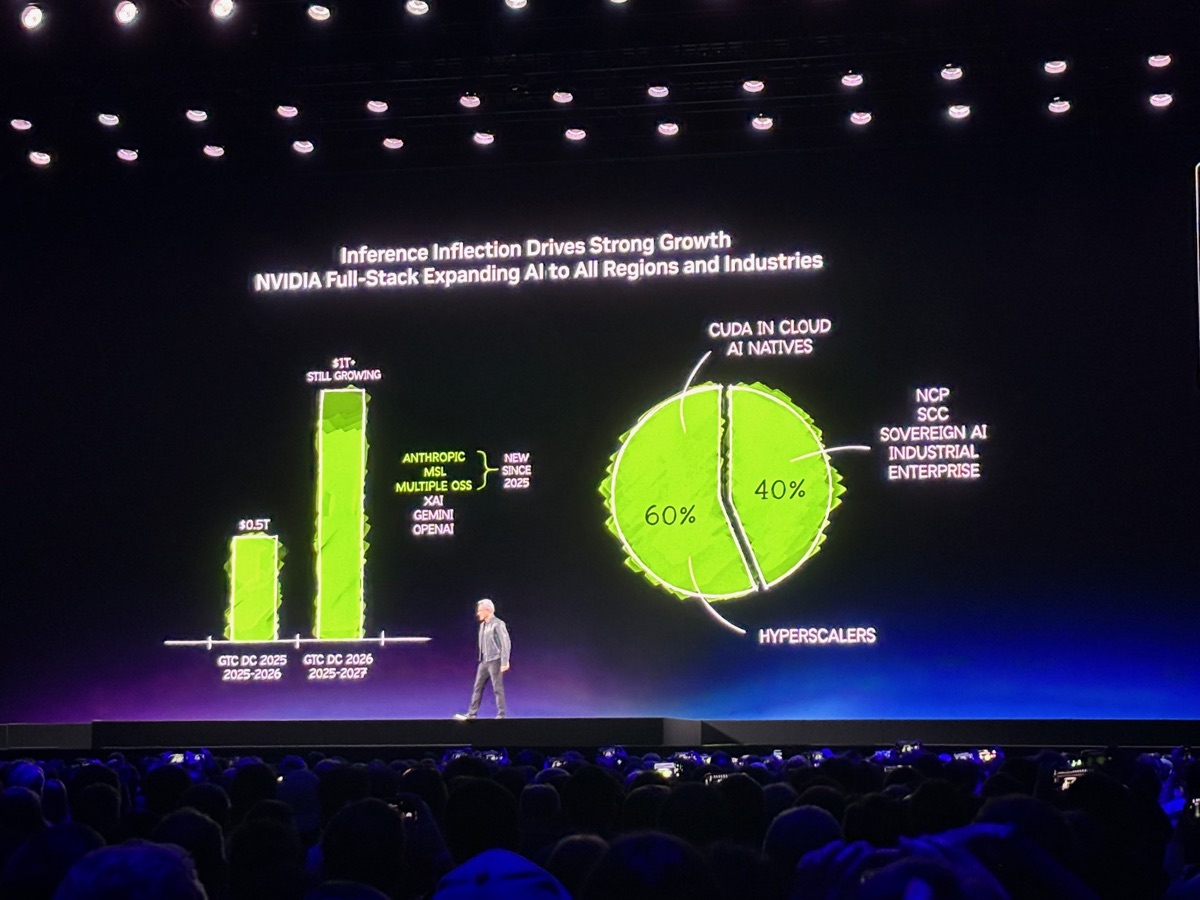

There is a massive debate raging right now: Is AI a bubble? Will the hyperscalers and their “Token AI Factories” just speed up climate change and leave a trail of wasted capital?

Among the most controversial takes at the conference was the idea that OpenClaw “was the new compute layer” – drawing direct comparisons to the emergence of Linux. So, I have to ask: Is Jensen just a shovel salesman? Or are we looking at the new bedrock?

I recently sat down for lunch with an old friend, Drew, to talk shop. We’ve both been full-stack developers for a long time, and we meet up to keep tabs on the AI winds. Drew took the skeptic’s position: He sees a bubble. He sees no sign that anyone will actually use all this massive compute, and he thinks the NVIDIA hype will eventually break under its own weight.

My response was simple: I can’t get enough tokens. Can you?

I am an early adopter by nature. I was there when dial-up gave way to the World Wide Web. I was there when GPUs fundamentally changed gaming, and when Steve Jobs pulled the iPhone out of his pocket (back when “touch interfaces” meant fighting with a plastic stylus).

It surprised no one in my circle that I jumped headfirst into AI. Over the last three years, I’ve moved from traditional regression and prediction modeling to deep generative workflows. It’s been a hell of a journey. I remember the skepticism I felt when GPT-3 could barely string a coherent sentence together, let alone write code. Now? I have four or five agents working on my projects concurrently. I spend my day context-switching between them, managing their focus as they augment my own.

This is where the “bubble” argument falls apart for me. The problem isn’t a lack of demand; it’s a lack of capacity.

Early on, I explored the world of unsubsidized model costs. I remember when my token costs could spike to $1,000 a month just for Claude Sonnet models on AWS Bedrock. I needed more, and I needed it cheaper. I jumped to Gemini, then to specialized models. Today, my workflow is a constant dance: I’m rocking Nemotron on local hardware for privacy and speed, then switching to Gemini when I need a massive context window.

I still can’t get enough tokens.

This is exactly what Jensen was talking about regarding the “500K engineer.” He wasn’t talking about people typing prompts into a chat box; he was talking about engineers using AI to augment their output 10x. When you are running agentic workflows, you aren’t just “using an app.” You are actually consuming massive, raw computational power. It is token generation all the way down.

The world is in a strange paradox. On one side, you have the public “hating” AI or fearing its impact. On the other, you have the actual practitioners who are building things and demanding more tokens, more speed, and more privacy. I see this conflict as a fundamental failure of the AI community. The missing bridge is the quality of the output people are creating.

Over the last two decades, most of what we’ve seen on screens has been human-created content, amplified by digital tools. Think of the evolution: from overhead transparencies and color gels to Photoshop and Illustrator, from manual typing to word processors, from 2D animation to full 3D modeling and rendering. All of these new tools still required skill to use. Arguably, AI is no different – but it’s a tool that almost anyone can use, so we have seen expert spaces flooded with slop. I would argue that artists, coders, and digital creators using AI to help their creative process are not creating slop: they are applying their expertise to a new medium to create something awesome.

I see this shift happening in real time. We are moving away from the “Cloud-Only” era. As the cost of running these models locally drops, and the demand for privacy and low latency rises, the bottleneck is shifting from the model to the machine.

When you actually calculate Total Cost of Ownership (TCO), the math shifts dramatically depending on the scale of the models you are running. Token APIs seem cheap for simple inference, but the moment you want to run or fine-tune large open-source models, such as an unquantized 70B+ model, you hit a hard VRAM ceiling. A dual NVIDIA RTX PRO™ 6000 Blackwell setup gives you 192GB of combined VRAM (96GB per card), which is enough to hold an unquantized 70B model at 16-bit precision (roughly 140GB of weights) locally. Renting that kind of VRAM in the cloud (like an AWS p4d.24xlarge) runs around $32 an hour. Keep it running around the clock and you’re burning through over $5,000 a week ($32 × 168 hours) in pure rental fees for dedicated access. Compare that to investing in a top-tier local workstation from a builder like Puget Systems. It can pay for itself in less than two months of heavy utilization. Once you hit that crossover point, your high-VRAM compute is essentially free apart from power, and your team can experiment without a ticking meter!
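
If you want to run that crossover math yourself, here is a minimal Python sketch. The $32 hourly rate is the cloud figure from above. Everything else is an assumption for illustration only: the $30,000 workstation price is a placeholder and not a quote from any builder, the 40-hour week is just one alternative usage pattern, and the function names are mine. Local running costs (power, cooling) default to zero, so treat the result as a best case.

```python
HOURS_PER_WEEK_24X7 = 168.0  # 24 hours x 7 days


def weekly_rental_cost(hourly_rate_usd: float,
                       hours_per_week: float = HOURS_PER_WEEK_24X7) -> float:
    """Cloud rental spend for one week at the given utilization."""
    return hourly_rate_usd * hours_per_week


def breakeven_weeks(workstation_price_usd: float,
                    hourly_rate_usd: float,
                    hours_per_week: float = HOURS_PER_WEEK_24X7,
                    local_cost_per_hour_usd: float = 0.0) -> float:
    """Weeks of use before buying beats renting.

    local_cost_per_hour_usd is a hypothetical knob for power and cooling.
    Returns infinity when running locally saves nothing per hour.
    """
    savings_per_hour = hourly_rate_usd - local_cost_per_hour_usd
    if savings_per_hour <= 0 or hours_per_week <= 0:
        return float("inf")
    return workstation_price_usd / (savings_per_hour * hours_per_week)


if __name__ == "__main__":
    cloud_rate = 32.0            # USD per hour, the figure used in this post
    workstation_price = 30_000   # USD, ASSUMED placeholder price

    print(f"Weekly rental, 24/7: ${weekly_rental_cost(cloud_rate):,.0f}")
    print(f"Break-even, 24/7: "
          f"{breakeven_weeks(workstation_price, cloud_rate):.1f} weeks")
    print(f"Break-even, 40 h/week: "
          f"{breakeven_weeks(workstation_price, cloud_rate, 40.0):.1f} weeks")
```

The takeaway from playing with the numbers is that utilization drives everything. The “less than two months” claim holds when the machine is busy most of the day; at office-hours usage the payback stretches out considerably, so plug in your own price and hours before deciding.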

I was talking about this with my partner, Monica, who is scaling AI skills at T-Mobile. We weren’t debating AI ethics – we were discussing how token usage is now the direct cost of scaling human capacity. When your team’s output is fundamentally tied to their computational budget, providing massive, local compute becomes the only sustainable way to actually multiply your workforce.

In the end, Drew agreed with me on one thing: he can’t get enough tokens either. But for me, the hunger is specifically for local tokens.

The “bubble” isn’t going to burst, because the demand isn’t going away. But the market is going to be defined by who can actually provide the muscle to meet that demand. If you want to move from “playing with prompts” to running serious, private, agentic workflows, you quickly realize that the consumer-grade hardware market isn’t built for this. You can’t run a local LLM powerhouse on a gaming laptop. You need serious iron, specifically the kind of optimized, high-performance workstations that can handle the heat and the hunger of a Blackwell workstation GPU or an RTX 5090 without breaking a sweat.

So, is AI just a shovel? Maybe. But if you’re planning to dig a canyon, you’re going to want a shovel that’s built to last.