In-camera visual effects (ICVFX) stages are evolving quickly. LED volumes are growing in size and resolution, camera tracking systems are becoming more precise, and real-time rendering pipelines are increasingly interconnected. As complexity increases, so does the need for standardization.

This is where SMPTE 2110 enters the conversation. Originally developed for broadcast environments, SMPTE 2110 defines a standardized method for transporting uncompressed video, audio, and metadata over IP networks. While it was not designed specifically for virtual production, many of the problems it solves — such as precise timing, flexible routing, and scalability — closely align with the challenges faced by modern ICVFX stages.

What is SMPTE 2110

At a high level, SMPTE 2110 is a set of standards that define how professional media is transported over IP networks rather than traditional SDI cabling. It was first published by the Society of Motion Picture and Television Engineers in 2017, and is formally called SMPTE ST 2110. There are additional numeric designations for the various parts of this standard suite, but in general conversation those and the ST portion of the name are often left out. That leaves just SMPTE 2110 as the shorthand, which is how we will refer to it throughout this post.

Unlike SDI, which bundles video, audio, and ancillary data into a single signal carried over a dedicated cable, SMPTE 2110 treats these elements as separate streams. Video, audio, and metadata are transmitted independently across an IP network and recombined at their destination. This separation allows for greater flexibility in routing and processing, especially in complex environments.
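As a rough illustration of that separation, the sketch below models one camera feed as three independent flows. The part numbers (ST 2110-20 for uncompressed video, ST 2110-30 for PCM audio, ST 2110-40 for ancillary data) come from the standard suite. Everything else, including the `EssenceFlow` class, the multicast addresses, and the port, is a hypothetical placeholder rather than configuration from a real stage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EssenceFlow:
    kind: str             # "video", "audio", or "ancillary"
    standard: str         # part of the SMPTE ST 2110 suite that carries it
    multicast_group: str  # hypothetical address, for illustration only
    udp_port: int         # hypothetical port, for illustration only


def camera_feed_flows() -> list[EssenceFlow]:
    """One camera feed expressed as three independent IP flows."""
    return [
        EssenceFlow("video", "ST 2110-20", "239.10.0.1", 5004),
        EssenceFlow("audio", "ST 2110-30", "239.10.0.2", 5004),
        EssenceFlow("ancillary", "ST 2110-40", "239.10.0.3", 5004),
    ]


def flows_for(receiver_needs: set[str], flows: list[EssenceFlow]) -> list[EssenceFlow]:
    """A receiver subscribes only to the essence types it actually needs."""
    return [flow for flow in flows if flow.kind in receiver_needs]


if __name__ == "__main__":
    feed = camera_feed_flows()
    # An audio monitor has no reason to receive the video flow.
    for flow in flows_for({"audio"}, feed):
        print(flow)
```

With SDI, the audio monitor in this example would receive the whole bundled signal and discard what it does not need. Here it simply never joins the video or ancillary flows.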

One of the most important aspects of SMPTE 2110 is timing. Rather than relying on genlock distributed through dedicated hardware, SMPTE 2110 systems use the Precision Time Protocol (PTP), a network-based timing mechanism, to keep all devices synchronized at the frame level. This synchronization is critical in any real-time production environment, and it becomes especially important when multiple systems must agree on exactly when a frame was captured, rendered, or displayed. The result is a media transport system that trades physical cabling complexity for network complexity, a shift that brings both significant advantages and new challenges.
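To make frame-level agreement concrete, here is a minimal sketch. It assumes a PTP-style clock that reports nanoseconds since a shared epoch, frame boundaries counted from that epoch, and the 90 kHz RTP clock that SMPTE 2110 video streams use. The function names are illustrative and do not come from any particular library.

```python
import math
from fractions import Fraction

RTP_VIDEO_CLOCK_HZ = 90_000  # RTP clock rate used for SMPTE 2110 video
RTP_WRAP = 2**32             # RTP timestamps are 32-bit and wrap around


def frame_index_at(clock_ns: int, fps: Fraction) -> int:
    """Index of the most recent frame boundary at or before clock_ns."""
    seconds = Fraction(clock_ns, 1_000_000_000)
    return math.floor(seconds * fps)


def rtp_timestamp_for_frame(frame_index: int, fps: Fraction) -> int:
    """90 kHz RTP timestamp of a frame boundary, wrapped to 32 bits."""
    ticks = math.floor(Fraction(frame_index) / fps * RTP_VIDEO_CLOCK_HZ)
    return ticks % RTP_WRAP


if __name__ == "__main__":
    fps = Fraction(24)
    # Two devices read the shared clock 10 ms apart, inside the same frame.
    node_a = frame_index_at(1_000_000_000, fps)
    node_b = frame_index_at(1_010_000_000, fps)
    print(node_a, node_b, rtp_timestamp_for_frame(node_a, fps))
```

Exact fractions are used instead of floats so that fractional rates such as `Fraction(24000, 1001)` do not drift. Because both devices derive the frame index from the same clock, they label the frame identically even though they sampled the clock at slightly different moments.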

How SMPTE 2110 Fits into an ICVFX Stage

ICVFX stages are fundamentally about synchronization. The camera, LED wall, tracking system, and render engine all need to agree on timing and perspective for the illusion to hold. In a typical Unreal Engine–based workflow, rendered frames must align precisely with camera motion and exposure. Any timing mismatch can manifest as tearing or perspective errors that are immediately visible on camera and can ruin an entire shot.

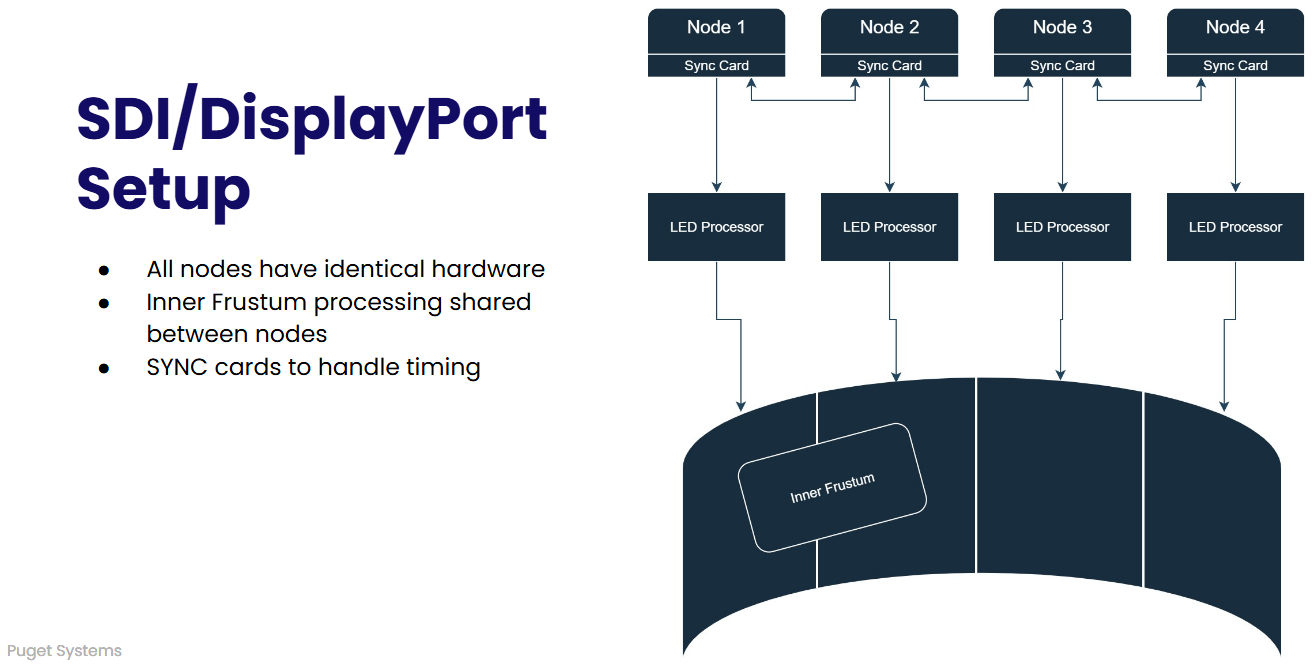

Even the most basic ICVFX stage has multiple render nodes, each dedicated to a specific section of the LED wall. Each node outputs its rendered frame to the LED wall processor, either via DisplayPort or HDMI from the GPU or via SDI from a capture card. Each node renders the background, or outer frustum, at all times, and also renders the camera’s view, or inner frustum, whenever the camera is pointed at its section of the wall. This setup relies on NVIDIA RTX PRO™ video cards, sync cards, and capture cards, as well as a mix of DisplayPort, SDI, and network cabling, to make sure every system renders at the same time and communicates with the LED wall, cameras, and motion tracking system.

SMPTE 2110 offers a different approach. Instead of outputting video over DisplayPort or SDI, each node sends its rendered frames out through a specialized network card, such as the NVIDIA® BlueField®-3. By transporting uncompressed video over IP with precise timing, SMPTE 2110 allows camera feeds, rendered outputs, and monitoring signals to coexist on a shared network fabric. Video streams are no longer tied to hard-wired signal paths and can be dynamically routed where they are needed, while still maintaining deterministic timing.

For Unreal Engine specifically, the value lies in flexibility and consistency. In current ICVFX setups, each node powering the wall must be configured identically. That means that if a stage wants to use dual GPUs to give extra horsepower to the inner frustum, then all nodes need to have two GPUs. Moreover, when the camera pans across the wall, the work of rendering the inner frustum must pass from node to node. This handoff has the potential to cause hiccups as a previously idle GPU needs to spin up.

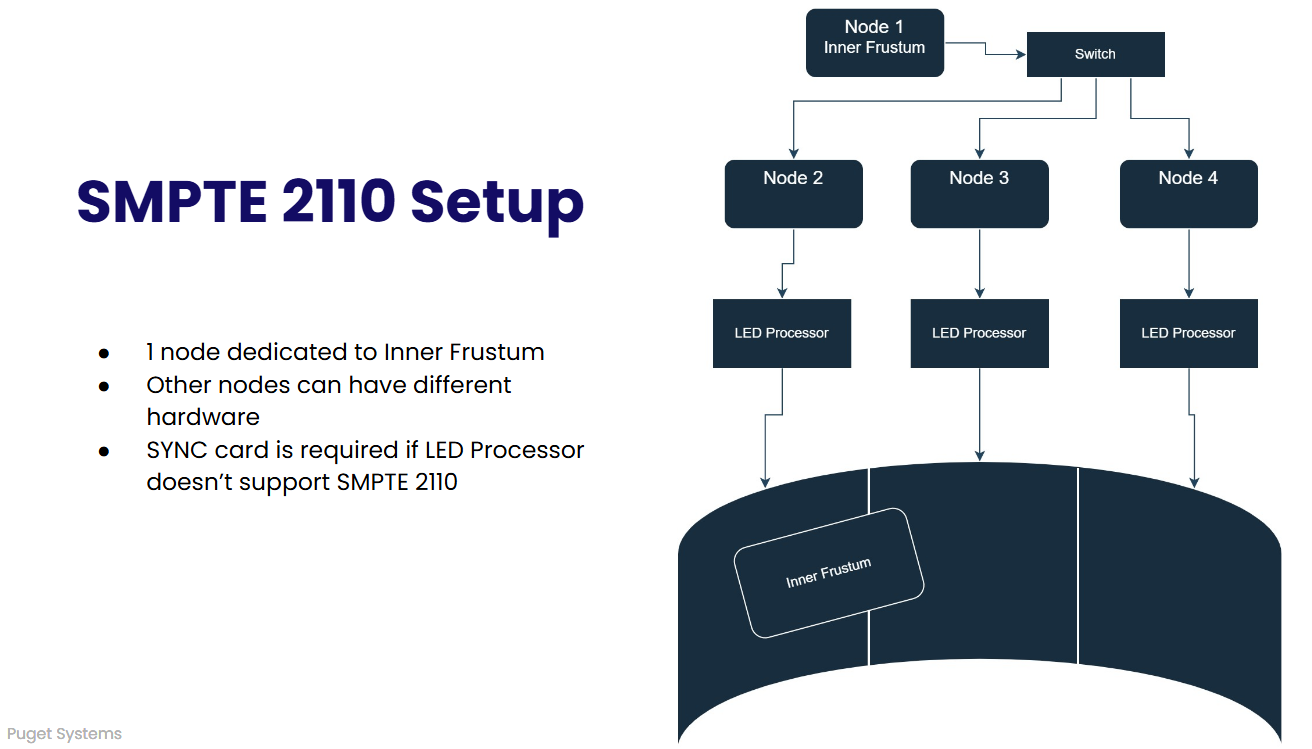

With SMPTE 2110, a single node (or two if using Unreal’s Tiled Rendering) can be dedicated to the inner frustum, with the remaining nodes only rendering the outer frustum. Because the inner frustum requires the highest render detail and frame rate, that node could use higher-end hardware than the nodes that are running the outer frustum.

Because a single node is dedicated to rendering the inner frustum, users with this setup should experience greater frame stability. With a traditional DisplayPort or SDI layout, the work of rendering the inner frustum moves from one system to another as the camera pans across the wall. Each of those handoffs is a potential point of failure and can cause a performance hiccup. With a SMPTE 2110 workflow, one node is always rendering that frustum and the other nodes only need to display the output. The result is less performance overhead and less risk of issues.
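The difference in handoffs can be sketched with a deliberately simplified model. It assumes the wall is split into equal sections with one node each, and that the inner frustum belongs to whichever section contains its center. Real frustums can straddle sections, and the function names here are illustrative only.

```python
def owning_node(pan_fraction: float, wall_nodes: int) -> int:
    """Node whose wall section contains the frustum center.

    pan_fraction runs from 0.0 (left edge of the wall) to 1.0 (right edge).
    """
    return min(int(pan_fraction * wall_nodes), wall_nodes - 1)


def count_handoffs(pan_path: list[float], wall_nodes: int,
                   dedicated_inner_node: bool = False) -> int:
    """Number of times inner-frustum rendering moves to a different node."""
    if dedicated_inner_node:
        # One node always renders the inner frustum, so it never moves.
        return 0
    owners = [owning_node(p, wall_nodes) for p in pan_path]
    return sum(1 for prev, cur in zip(owners, owners[1:]) if prev != cur)


if __name__ == "__main__":
    # A slow pan from the left edge of the wall to the right edge.
    pan = [step / 10 for step in range(11)]
    print("per-section nodes:", count_handoffs(pan, wall_nodes=4))
    print("dedicated node:   ", count_handoffs(pan, wall_nodes=4,
                                                dedicated_inner_node=True))
```

In this toy model a single pan across a four-node wall hands the inner frustum off three times, while the dedicated-node layout never hands it off at all.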

While SMPTE 2110 is often discussed in the context of full studio facilities, its impact is most immediately felt on the stage itself. Integration with the wider studio, such as control rooms, ingest, or post-production, can follow naturally, but the stage remains the critical starting point.

The Downsides and Real-World Challenges

Despite its advantages, SMPTE 2110 is not a universal solution and it is not without cost. The most obvious challenge is complexity. Deploying SMPTE 2110 requires a deeper understanding of networking than traditional SDI workflows. Configuration, validation, and troubleshooting all demand skills that sit at the intersection of broadcast engineering and IT infrastructure.

There is also a higher barrier to entry in terms of hardware. Network switches, interface cards, LED processors, and supporting infrastructure must all be capable of handling sustained, high-bandwidth, uncompressed video streams. While these requirements are manageable, they represent a real investment.
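To get a feel for what "high-bandwidth" means here, the back-of-the-envelope sketch below estimates the payload of an uncompressed stream as width × height × frame rate × bits per pixel. It counts active picture data only and ignores packet overhead, so real links need somewhat more. The bit depths and the 80% headroom figure are assumptions chosen for illustration, not recommendations.

```python
import math


def video_payload_gbps(width: int, height: int, fps: float, bits_per_pixel: int) -> float:
    """Approximate payload of one uncompressed video stream, in Gb/s."""
    return width * height * fps * bits_per_pixel / 1e9


def streams_per_link(link_gbps: float, stream_gbps: float, headroom: float = 0.8) -> int:
    """How many streams fit if only `headroom` of the link is budgeted for video."""
    return math.floor(link_gbps * headroom / stream_gbps)


if __name__ == "__main__":
    # 10-bit 4:2:2 averages 20 bits per pixel; 10-bit RGB 4:4:4 uses 30.
    uhd_422_60 = video_payload_gbps(3840, 2160, 60, 20)
    uhd_rgb_24 = video_payload_gbps(3840, 2160, 24, 30)
    print(f"UHD 4:2:2 10-bit @ 60 fps: {uhd_422_60:.2f} Gb/s")
    print(f"UHD RGB 10-bit @ 24 fps:   {uhd_rgb_24:.2f} Gb/s")
    print("Streams on a 100 Gb/s link:", streams_per_link(100, uhd_422_60))
```

A single UHD stream at 60 frames per second comes out to roughly 10 Gb/s under these assumptions, which is why every switch, interface card, and LED processor in the chain has to be sized for sustained throughput rather than occasional bursts.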

Debugging can be more difficult as well. When issues arise in an IP-based system, they may not be immediately visible in the way a faulty cable or misrouted SDI signal would be. Effective monitoring and validation tools become essential.

Perhaps most importantly, SMPTE 2110 does not automatically simplify an ICVFX workflow. Poorly designed implementations can introduce more points of failure rather than fewer. Success depends heavily on careful system design and thorough testing.

When SMPTE 2110 Makes Sense for ICVFX

SMPTE 2110 is not a requirement for every ICVFX stage. Smaller or simpler stages may find that traditional workflows remain sufficient for their needs.

However, for teams building larger, more flexible, or more future-facing virtual production environments, SMPTE 2110 offers a compelling foundation. Its ability to scale, its emphasis on timing accuracy, and its alignment with modern GPU-driven workflows make it increasingly relevant as ICVFX continues to mature.

At Puget Systems, our goal is not to push a particular technology for its own sake, but to help studios make informed decisions based on real-world workflows. SMPTE 2110 is one more tool in that toolkit — and when used appropriately, it can enable capabilities that were previously difficult or impractical to achieve.