
TL;DR: Effects of Reducing PCI-e Bandwidth to GPU for Content Creation

In the worst case, restricting the available PCI-e bandwidth to the primary GPU can reduce performance in content-creation applications by up to 58% for specific workflows when comparing PCI-e 4.0 x16 and PCI-e 3.0 x4. However, most applications tested showed little to no performance degradation, including Unreal Engine, Stable Diffusion, and Blender.

In the more common situation of reducing PCI-e bandwidth from 4.0 x16 to 4.0 x8, there was little change in content creation performance: scores for video editing and motion graphics decreased by only 3% on average. In more extreme situations (such as running at 4.0 x4 / 3.0 x8), this changed to an average performance reduction of 10%. Finally, in the absolute worst case (PCI-e 3.0 x4), the video editing and motion graphics scores averaged 75% of baseline performance, with both GPU effects and H.264 media showing substantial performance degradation.

In general, as long as you are able to run your GPU at PCI-e 4.0 x8 or above (or PCI-e 3.0 x16 if you have an older motherboard), you can expect nearly full performance for content creation workflows. Since it is incredibly uncommon to have a situation where a modern system will run at anything below this, we largely recommend not worrying about PCI-e bandwidth for this type of workflow.

Introduction

PCI Express (PCI-e) is a technology that connects many internal computer devices to the motherboard—including video cards, NVMe drives, and network cards. Over the years, we have seen several revisions of the technology, but the most commonly seen currently are PCI-e 3.0, 4.0, and 5.0. PCI-e 3.0 is mainly relegated to older devices now, as all current-gen motherboards and graphics cards support at least PCI-e 4.0, and the newest Z790 and X670 motherboards support PCI-e 5.0 in at least one full-size slot (although we have yet to see a consumer video card at PCI-e 5.0).

Each PCI Express revision introduces new features, but the primary difference is in the data transfer rate. A PCI-e connection consists of a number of lanes (typically between 4 and 16 for most expansion slots) which have a maximum transfer rate depending on the PCI-e version; every version since 3.0 has doubled the previous version’s transfer rate for a per-lane rate of 32 GT/s on PCI-e 5.0. The total bandwidth of an expansion slot depends on both the number of lanes and the PCI-e version, such that a PCI-e 5.0 connection with eight lanes has the same bandwidth (31.5 GB/s) as a PCI-e 4.0 connection with 16 lanes.
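As a rough illustration of how lane count and version combine, the sketch below computes approximate one-direction bandwidth for the link configurations tested in this article. It assumes the per-lane raw rates of 8, 16, and 32 GT/s for PCI-e 3.0, 4.0, and 5.0 and the 128b/130b line encoding those versions use; the function and variable names are illustrative only, and real-world throughput will be somewhat lower due to protocol overhead.

```python
# Approximate usable PCI-e bandwidth per direction.
# Assumption: per-lane raw rates in GT/s and 128b/130b encoding (PCI-e 3.0+).
GT_PER_SEC = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(version, lanes):
    """Return the approximate one-direction bandwidth of a link in GB/s."""
    gigabits_per_sec = GT_PER_SEC[version] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_sec / 8  # bits -> bytes


# The six link configurations compared in this article.
for version, lanes in [("4.0", 16), ("4.0", 8), ("4.0", 4),
                       ("3.0", 16), ("3.0", 8), ("3.0", 4)]:
    bandwidth = link_bandwidth_gb_s(version, lanes)
    print(f"PCI-e {version} x{lanes}: {bandwidth:.2f} GB/s")
```

Under these assumptions, halving the lane count or stepping down one PCI-e generation each halves the available bandwidth, which is why 4.0 x8 and 3.0 x16 (and likewise 4.0 x4 and 3.0 x8) are treated as equivalent throughout the results.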

Currently, many desktop motherboards feature limited add-in card support, instead dedicating their available PCI-e lanes to features like M.2 connections, additional USB ports, and high-speed Ethernet connections. This means they may only have three PCI-e slots, and typically, two of those will share bandwidth: the two slots combined provide either a single PCI-e 5.0 x16 connection or two PCI-e 5.0 x8 connections. The third slot is typically a PCI-e 4.0 x4 (or even just x2!) connection.

This limitation on add-in card support means that users who want a powerful video card and additional network cards, RAID cards, or capture cards will have to reduce their video card's link to x8 at best. And, in multi-GPU setups, one of the cards will likely run at speeds as low as x4. But the question is: how much performance is lost when video cards are bandwidth-restricted so dramatically?
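If you are unsure what link your own GPU is negotiating, one way to check on NVIDIA hardware is to query `nvidia-smi`. The sketch below is a minimal example, not part of our test procedure: it assumes `nvidia-smi` is on the PATH, the helper names are hypothetical, and the sample row is made up for illustration. Note that the reported current generation may drop at idle due to power management, so it is best checked under load. AMD cards need a different tool.

```python
import subprocess

# Query fields understood by nvidia-smi's --query-gpu option.
QUERY = ("pcie.link.gen.current,pcie.link.width.current,"
         "pcie.link.gen.max,pcie.link.width.max")


def parse_link_csv(text):
    """Parse `--format=csv,noheader` rows into one dict per GPU."""
    links = []
    for row in text.strip().splitlines():
        gen_cur, width_cur, gen_max, width_max = (int(v) for v in row.split(","))
        links.append({
            "gen_current": gen_cur,
            "width_current": width_cur,
            "gen_max": gen_max,
            "width_max": width_max,
            # True when the link is running below what the card supports.
            "reduced": gen_cur < gen_max or width_cur < width_max,
        })
    return links


def query_links():
    """Run nvidia-smi and return the parsed link state of each NVIDIA GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    return parse_link_csv(result.stdout)


# Hypothetical sample row: a Gen 4 x16 card currently linked at Gen 4 x8.
sample = "4, 8, 4, 16\n"
for link in parse_link_csv(sample):
    print(f"Gen {link['gen_current']} x{link['width_current']} "
          f"(max Gen {link['gen_max']} x{link['width_max']}), "
          f"reduced: {link['reduced']}")
```

On a system with an NVIDIA GPU, calling `query_links()` instead of parsing the sample string returns the live values.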

Test Setup

Test Platform

| CPUs: Intel Core i9 13900K 16-core |

| CPU Cooler: Noctua NH-U12A |

| Motherboard: ASUS ProArt Z690-Creator WiFi |

| RAM: 2x DDR5-4800 32GB (64GB total) |

| GPUs: NVIDIA GeForce RTX 4080 16GB (Studio Driver 536.67); AMD Radeon RX 7900 XTX 24GB (Adrenalin 23.7.2) |

| PSU: Super Flower LEADEX Platinum 1600W |

| Storage: Samsung 980 Pro 2TB |

| OS: Windows 11 Pro 64-bit (22621) |

Benchmark Software

| DaVinci Resolve 18.5 (PugetBench for DaVinci Resolve 0.93.2) |

| Premiere Pro 23.5.0 (PugetBench for Premiere Pro 0.98.0) |

| After Effects 23.5 (PugetBench for After Effects 0.96.0) |

| Unreal Engine 5.2 |

| Blender 3.6.0 |

| Automatic 1111 (Version: 1.5.1, xformers: 0.0.17, Checkpoint: v1-5-pruned-emaonly) |

| SHARK (Version: 20230701_796, Checkpoint: stabilityai/stable-diffusion-2-1-base) |

To evaluate the impact of PCI-e bandwidth, we used one of our fastest desktop platforms: the Intel i9 13900K. Although a workstation platform like Threadripper Pro may have reduced the potential for CPU bottlenecks in some workflows, those boards have enough PCI-e lanes to dedicate a full 16 to each PCI-e slot. We thus decided to use a platform where PCI-e lane sharing will reduce bandwidth to add-in cards to see the real-world impact on the types of systems we sell. The GPUs we are testing with are high-end consumer models from NVIDIA and AMD, the GeForce RTX 4080 and Radeon RX 7900 XTX, to see if there is any difference between manufacturers when bandwidth-constrained.

We are using a broad testing suite that includes most categories of benchmarks that we currently test with for GPU reviews. These are content-creation-focused and include our Puget Systems Benchmarks for After Effects, DaVinci Resolve, and Premiere Pro, in addition to our under-development benchmarks for Unreal Engine 5.2. We are also testing offline GPU rendering with Blender 3.6 and Stable Diffusion performance with SHARK and Automatic1111.

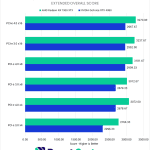

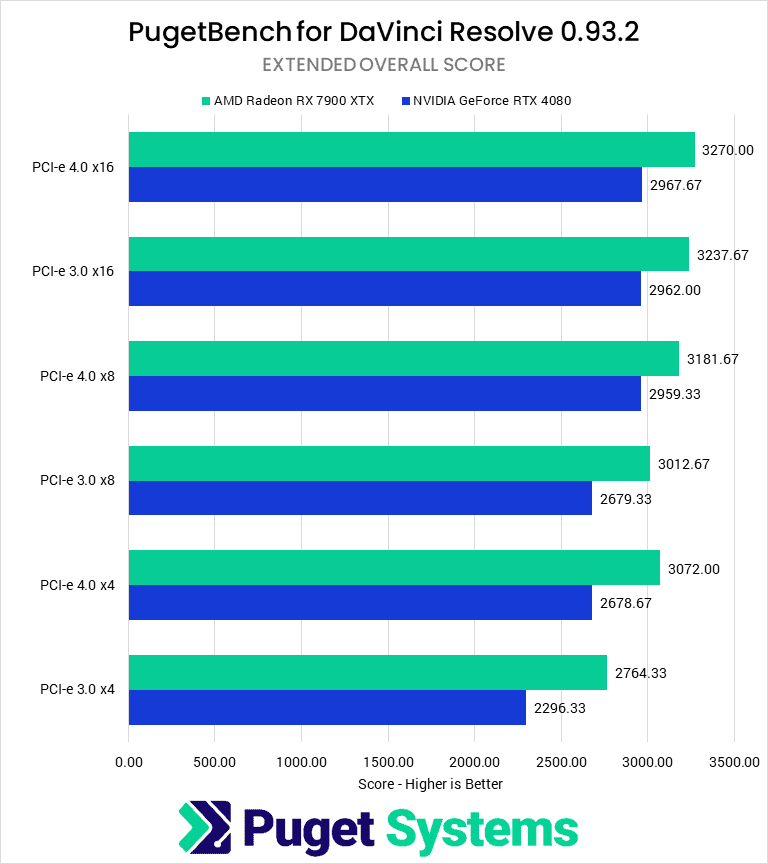

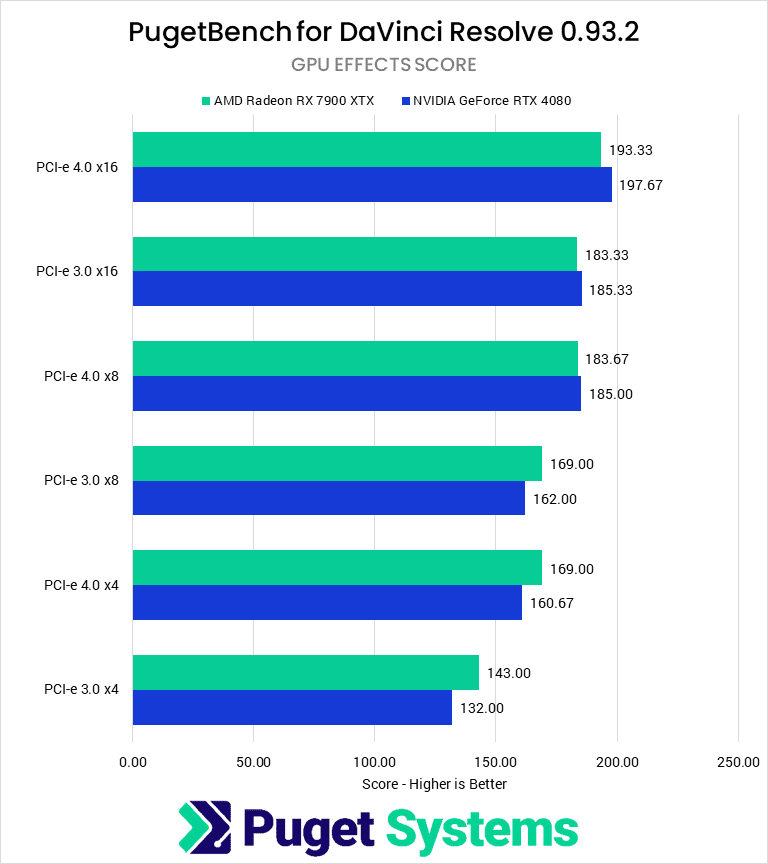

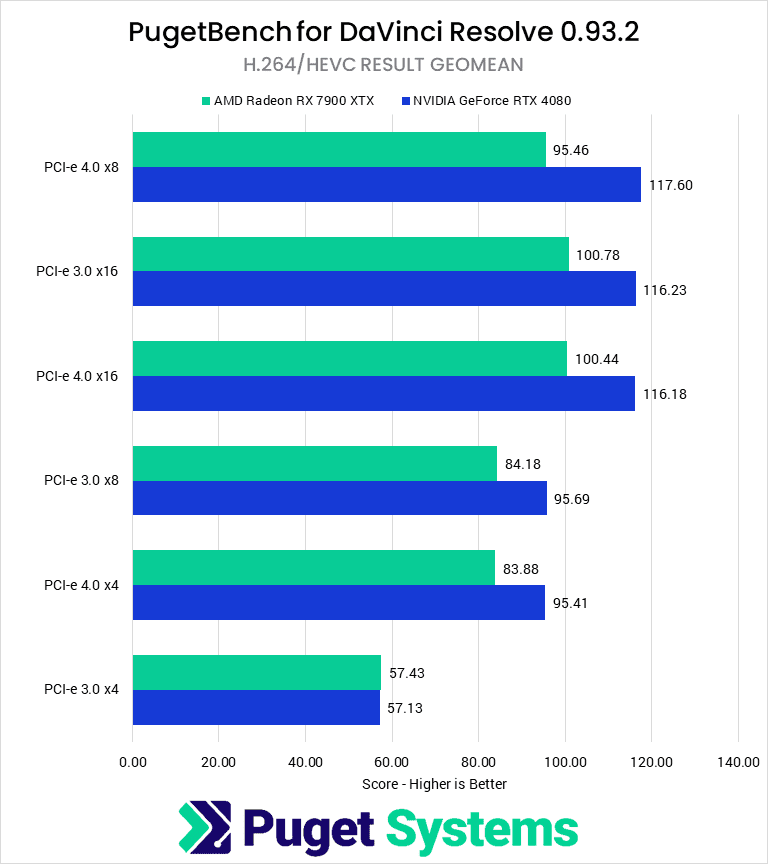

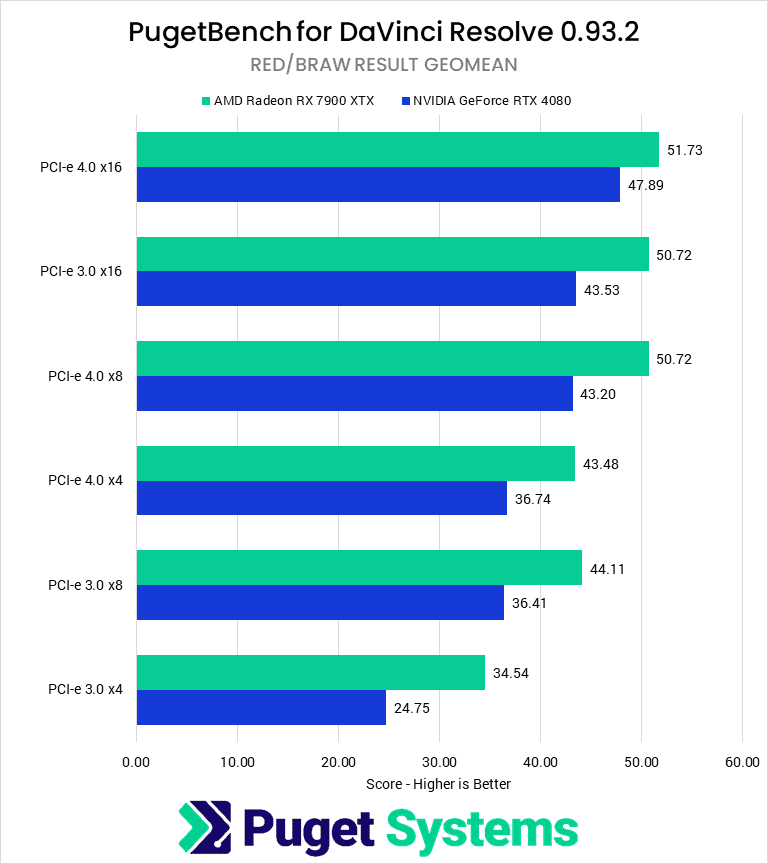

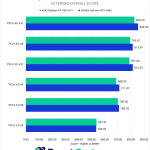

Video Editing: DaVinci Resolve Studio

Starting with DaVinci Resolve, we see that decreasing PCI-e bandwidth to the GPU has a noticeable effect on overall performance, with a maximum loss of 22% for NVIDIA and 15% for AMD. This is a relatively significant difference, but there is also a clear grouping of scores at the higher end of PCI-e bandwidth.

At PCI-e 4.0 x16 and x8, and PCI-e 3.0 x16, there is little difference in performance except in our GPU Effects tests, where halving the bandwidth to 4.0 x8 / 3.0 x16 resulted in 5% lower scores. The NVIDIA card also showed a decrease of 9% in RED/RAW media results. What this means is that sharing bandwidth for an add-in card on a Gen 4 motherboard should only negatively affect your performance if you primarily use RAW media.

The story changes somewhat if that resource sharing occurs on an older PCI-e 3.0 connection, as reducing bandwidth to 3.0 x8 / 4.0 x4 sees a further overall drop of 10% and 6% for NVIDIA and AMD, respectively. All three score categories showed substantial performance hits, particularly for RAW media and GPU effects.

Finally, when the GPU bandwidth is constrained to PCI-e 3.0 x4, we see the aforementioned 22% and 15% score decreases. Although all categories perform poorly in this configuration, we see the most substantial hit for H.264/HEVC media, which drops precipitously with the last halving of bandwidth. This is interesting because in this case it isn’t raw GPU performance being utilized, but rather the NVDEC/NVENC hardware decoder/encoder.

Overall, the difference between x8 and x16 shouldn’t come into play for most DaVinci Resolve users. The most common workflow where it would is one that combines RAW media with add-in cards like a Blackmagic DeckLink for accurate video monitoring. However, for that type of workflow, we typically recommend upgrading to AMD Threadripper PRO anyway, which has plenty of PCI-e lanes to handle multiple cards.

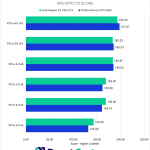

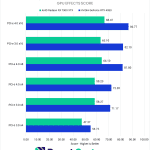

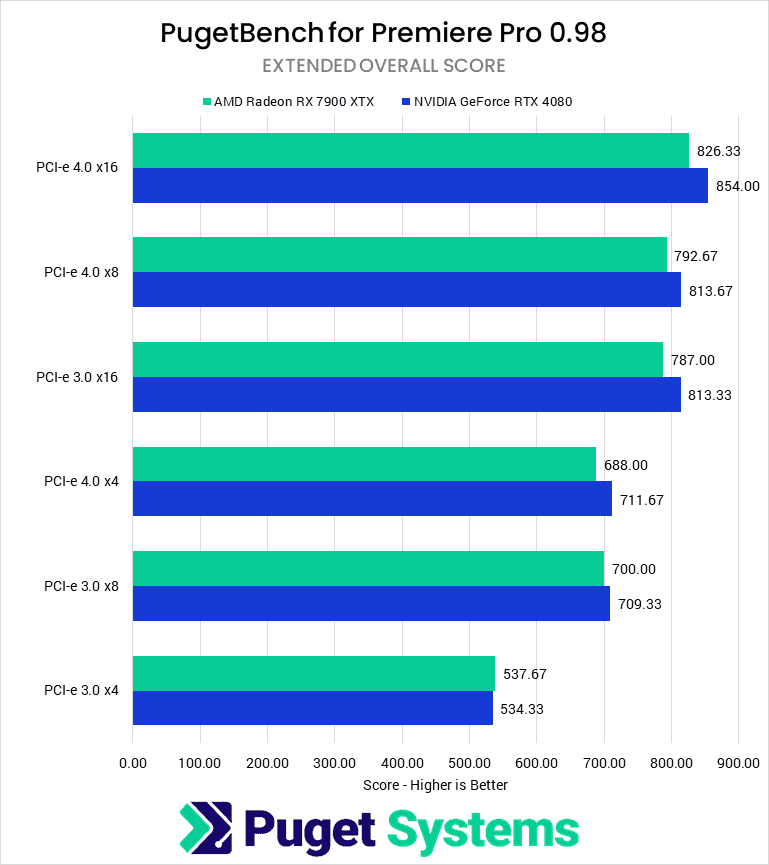

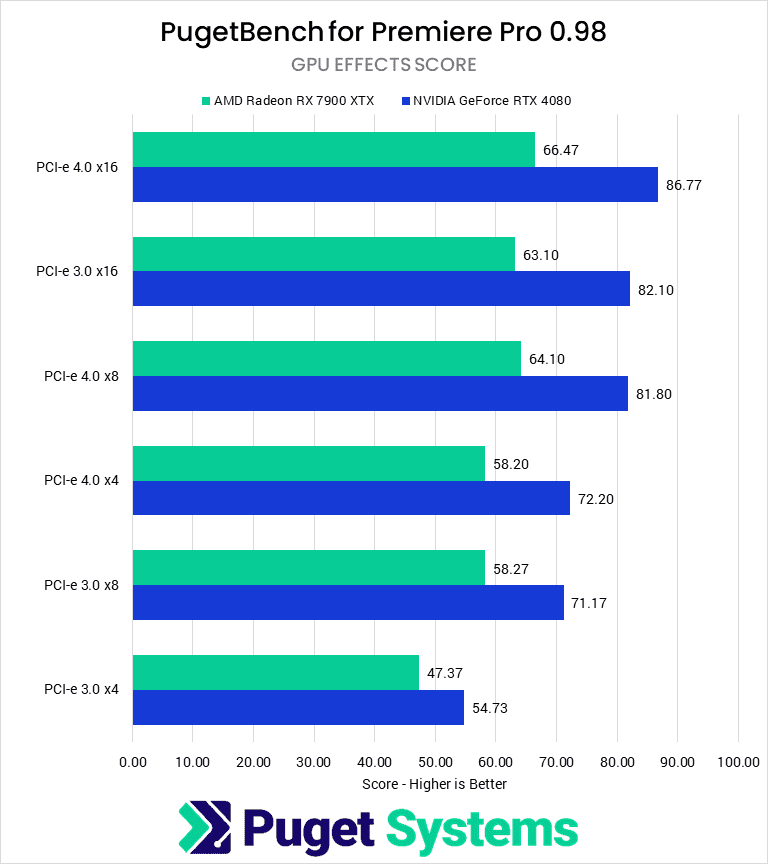

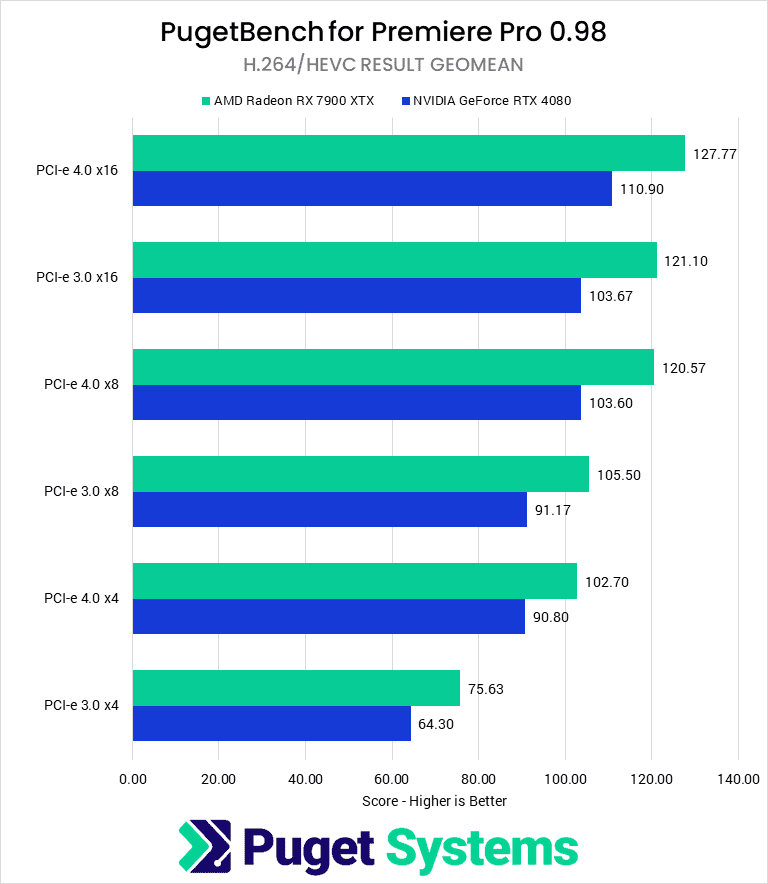

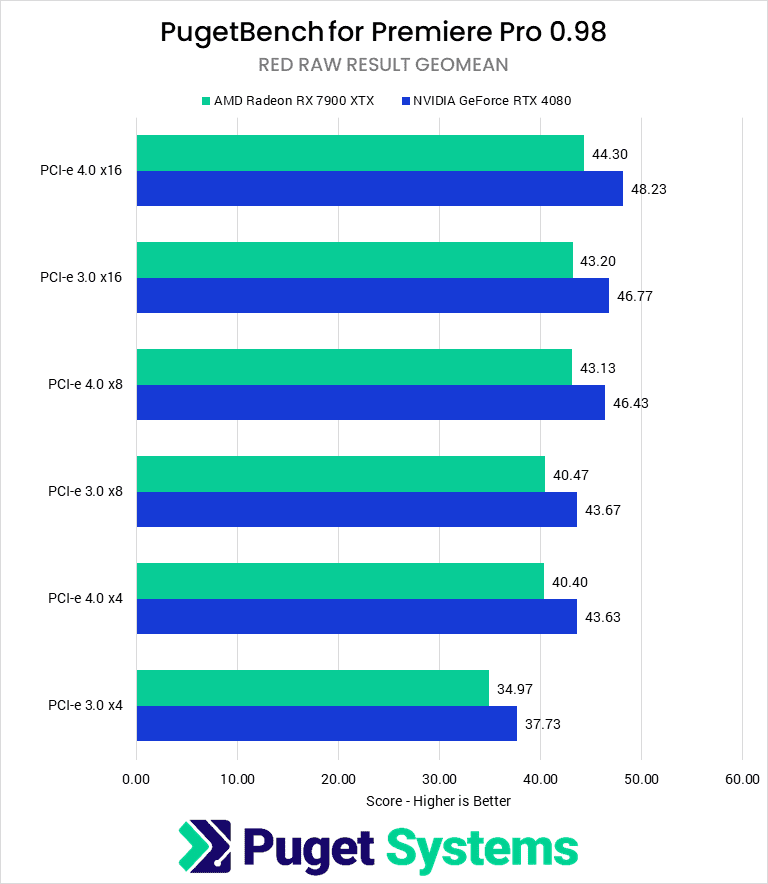

Video Editing: Adobe Premiere Pro

Continuing the trend from DaVinci Resolve, Adobe Premiere Pro shows an even larger difference in performance as PCI-e bandwidth to the GPU is reduced. In terms of overall performance, both AMD and NVIDIA showed a worst-case decrease of about 35% between PCI-e 4.0 x16 and PCI-e 3.0 x4, although AMD was slightly less affected than NVIDIA.

When running a more common lane reduction of PCI-e 4.0 x8 (or PCI-e 3.0 x16), both manufacturers had a modest performance drop of 4%. The largest effect was on H.264/HEVC tests (6%) and the smallest on the RED/RAW tests (3%). Overall, running the GPU at half-bandwidth will have little impact on real-world performance in Premiere Pro.

The next step down, at PCI-e 4.0 x4 (PCI-e 3.0 x8), shows a more considerable difference of 17% from full bandwidth. Running your GPU in this configuration is less than ideal, with all scores seeing at least a 9% decrease in performance and H.264 media particularly affected.

When working in Premiere Pro, especially with H.264/HEVC codecs, reducing the PCI-e bandwidth too far can greatly affect your performance. Although the somewhat common situation of dropping to PCI-e 4.0 x8 does not incur too large a penalty, anything below that should be avoided.

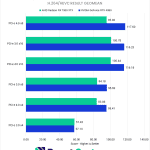

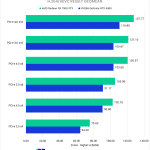

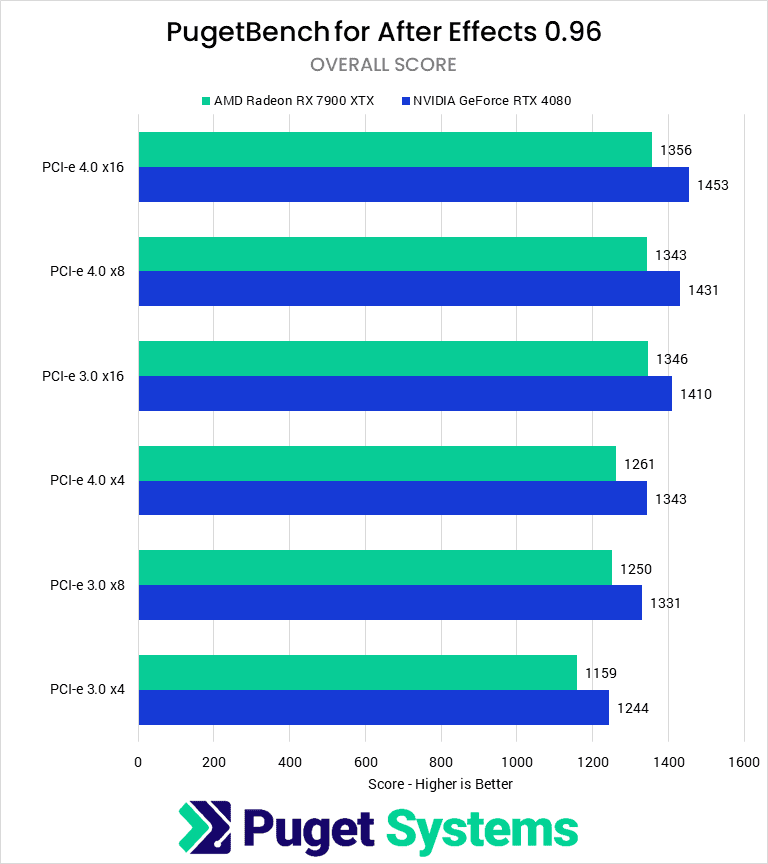

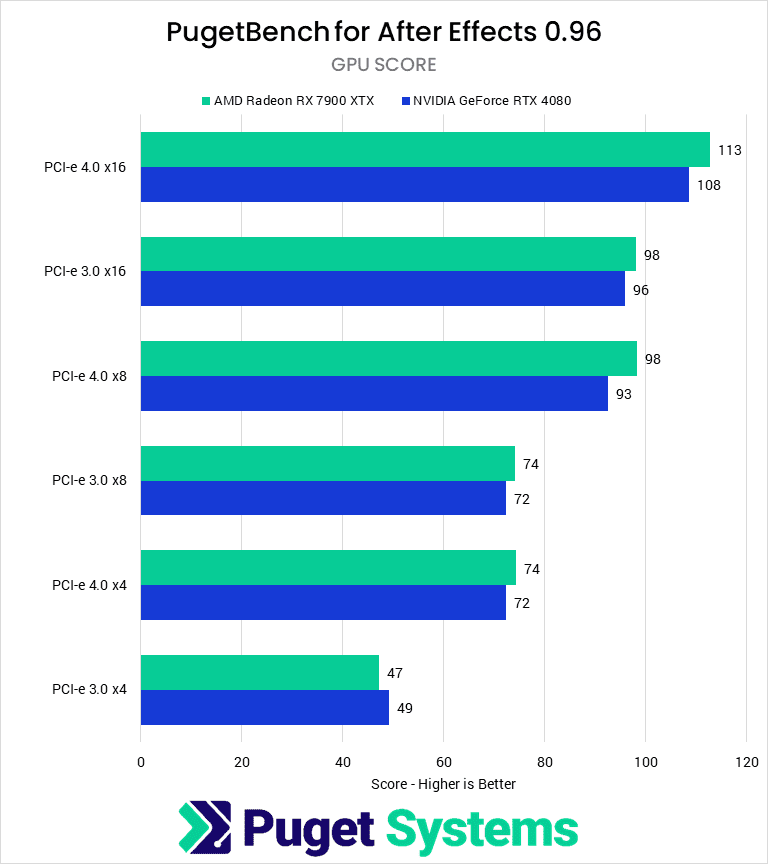

Motion Graphics/VFX: After Effects

After Effects is not a benchmark we usually run when investigating GPU performance, as it typically doesn’t require a powerful GPU. Provided you have sufficient VRAM for your project, mid-range GPUs are more than enough for most workflows. Nonetheless, we decided to test with After Effects to see if constricting GPU PCI-e bandwidth would negatively impact performance.

Starting with our first decrease in bandwidth from PCI-e 4.0 x16 to 4.0 x8 / 3.0 x16, we see virtually no performance impact outside of the margin of error for the overall score. However, there is a reduction in performance of about 10% in the GPU Effects score for both cards.

Reducing the PCI-e connection even further to 4.0 x4 / 3.0 x8 does show an overall performance drop of 7%, which is noticeable but probably acceptable for many users, given that After Effects tends to be CPU-bound rather than GPU-bound. If GPU effects are a large part of your workflow, however, the impact is much larger: the GPU Effects score drops by 33%.

At the lowest tested bandwidth of 3.0 x4, we finally see an Overall Score greater than 10% below the 4.0 x16 score. At this point, even RAM Preview and Render scores (not shown) are seeing performance loss, and GPU effects are particularly struggling with only 45% of the performance compared to running at full bandwidth.

It is very rare to run a GPU at PCI-e 3.0 x4 speeds, however, so in the vast majority of situations, PCI-e speed and bandwidth should not be a concern for After Effects users.

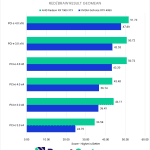

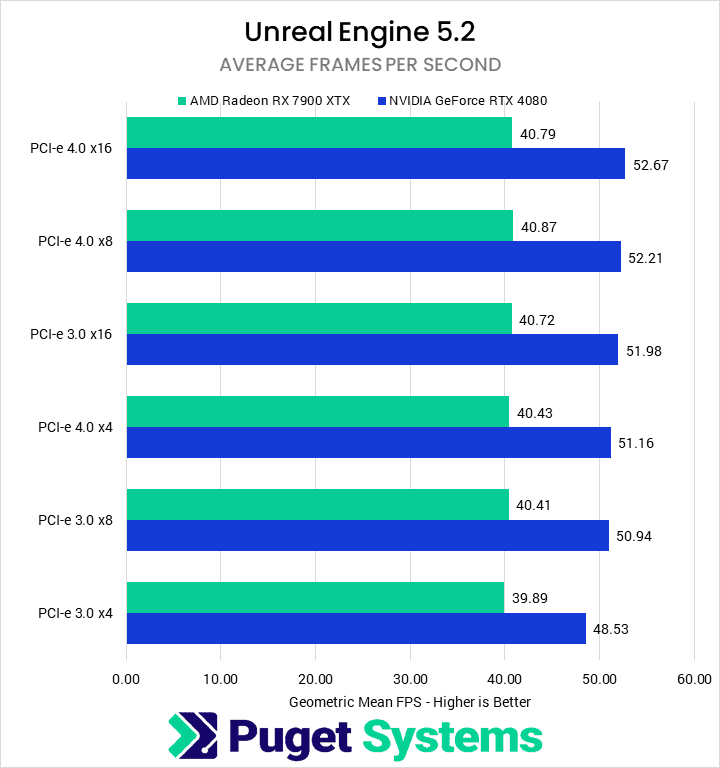

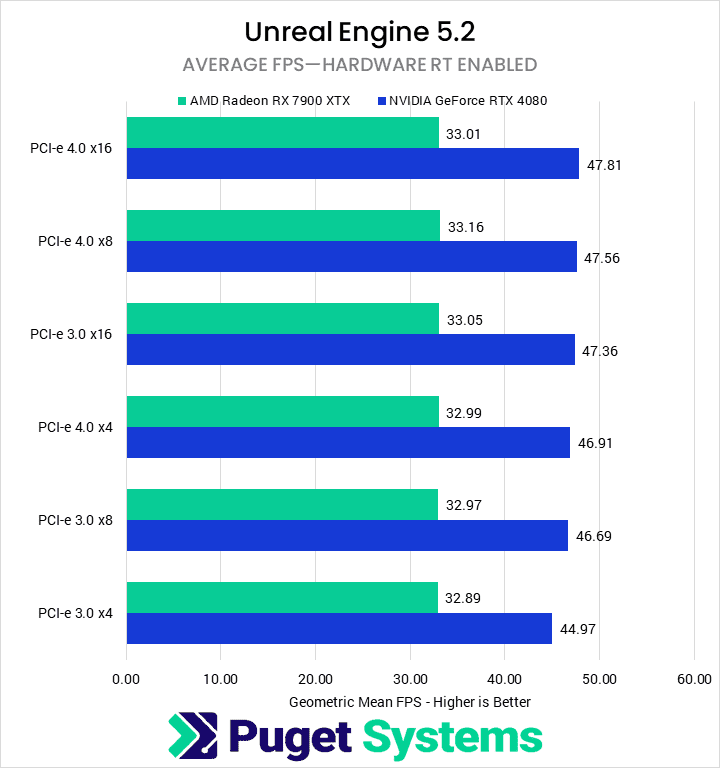

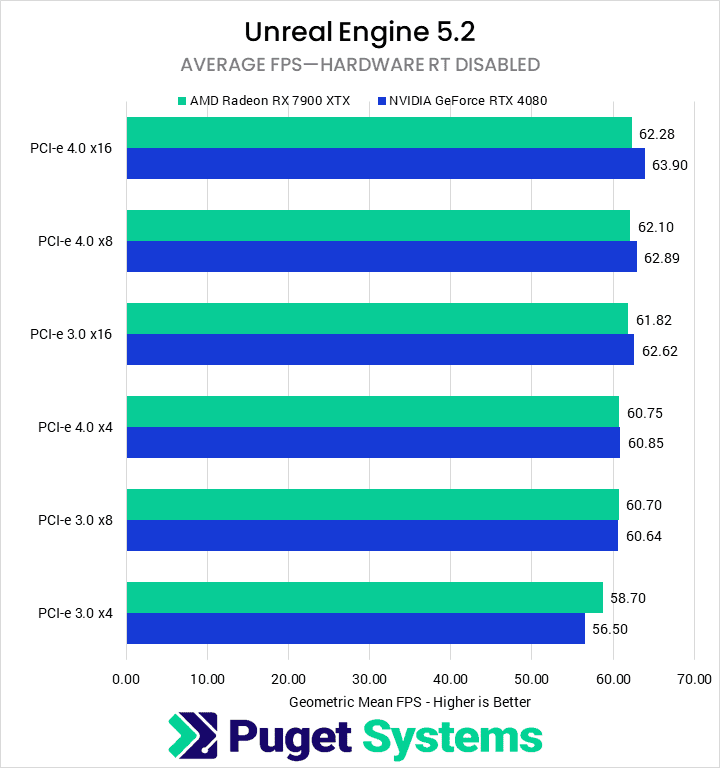

Game Dev/Virtual Production: Unreal Engine

The real-time renderer we included in our testing is Unreal Engine 5.2, which is used in a wide variety of industries including game development, virtual production, and architecture visualization. As you might expect, reducing PCI-e bandwidth negatively impacts the average fps for NVIDIA and AMD GPUs. However, the effect is much more pronounced for the NVIDIA GPU, with an 8% total difference between PCI-e 3.0 x4 and 4.0 x16 compared to the 2% difference for the AMD GPU. When looking at sub-scores, we also see that the effect is much more pronounced for rasterized rendering performance, with 11% and 5% less performance for the RTX 4080 and RX 7900 XTX, respectively.

Although a drop in average FPS of 11% is significant, most of this drop for both AMD and NVIDIA occurs only when running at the lowest bandwidth we tested, PCI-e 3.0 x4; performance is impacted much less at 3.0 x8-equivalent bandwidth. For most users, even when running on an older motherboard with PCI-e lane sharing, this won’t be a large sacrifice of performance.

In other words, just like with After Effects, PCI-e bandwidth is not likely a significant concern for the majority of Unreal Engine developers and users.

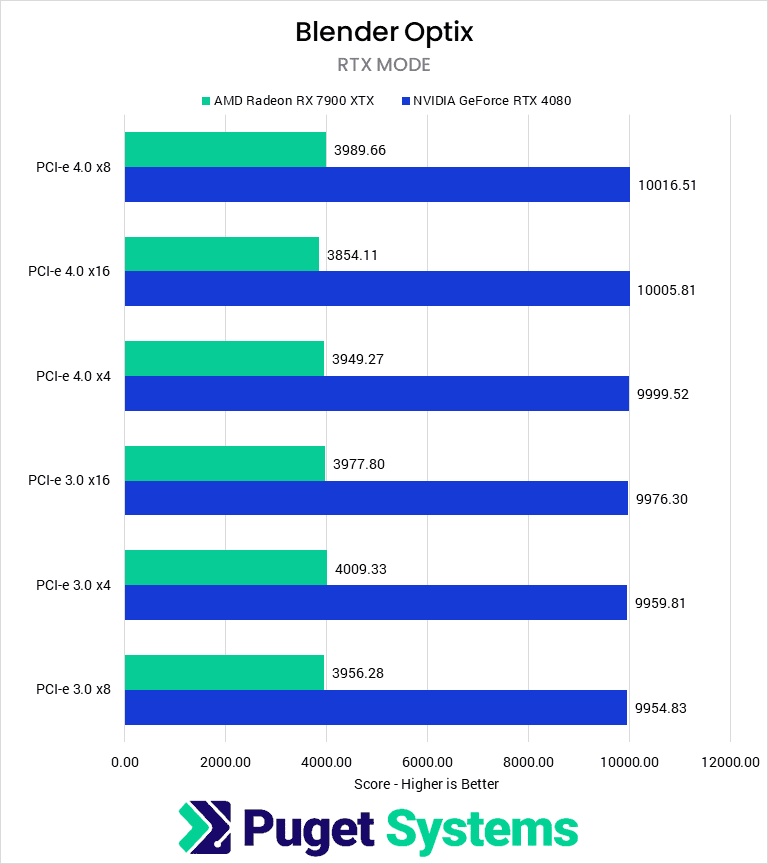

GPU Rendering: Blender

Moving on to our offline GPU Rendering test with Blender, we see no impact on performance from changes in PCI-e bandwidth. Most offline renderers start by loading the scene into VRAM, so this should be the only impact of the PCI-e connection—unless the system does not have enough VRAM to load the scene and must operate off system memory. However, this introduces further performance hits beyond what could be caused by limited PCI-e bandwidth, frequently resulting in a failure to load the scene or crashing during the render.

Given that GPU rendering in applications like Blender is one of the workloads most likely to use multiple GPUs (and thus one of the most likely to have some or all of them running at reduced bandwidth), it is good to see that lowering bandwidth should not have any adverse effect.

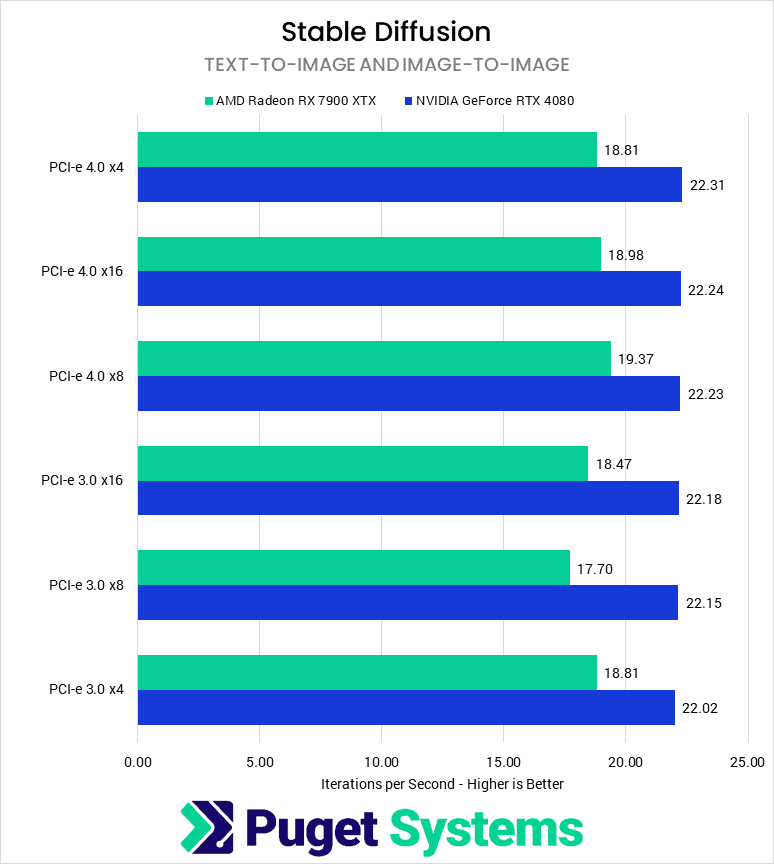

Stable Diffusion

For our Stable Diffusion testing, we used two of the three implementations discussed in our recent Stable Diffusion Methodology article, performed a geometric mean calculation on each of their results, and then took the higher result of the two implementations for each bandwidth-hardware combination to display on the chart. For AMD, each result shown is from the SHARK implementation, while for NVIDIA, they are all using the Automatic 1111 implementation.
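The selection step described above can be sketched as follows. This is a minimal illustration rather than our actual benchmark harness: the iterations-per-second figures are hypothetical placeholders, not measured results, and the function name is made up for the example.

```python
from statistics import geometric_mean

# Hypothetical iterations-per-second results for one GPU at one PCI-e
# setting. These are placeholder numbers, NOT measured data.
results = {
    "Automatic 1111": [20.0, 18.0, 22.0],
    "SHARK": [15.0, 14.0, 16.0],
}


def best_implementation(results_by_impl):
    """Take the geometric mean per implementation, then keep the highest."""
    means = {name: geometric_mean(values)
             for name, values in results_by_impl.items()}
    best = max(means, key=means.get)
    return best, means[best]


name, score = best_implementation(results)
print(f"Charted result: {name} at {score:.2f} it/s")
```

Repeating this for each GPU and PCI-e configuration gives one charted value per bandwidth-hardware combination.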

The results for Stable Diffusion are all within the margin of error for this test. However, much like Blender, the speed of the PCI-e connection can change how long it takes to load the model into VRAM, which doesn’t show up in a performance benchmark like this. Because of this, we would recommend avoiding decreased bandwidth if possible, but it likely won’t impact things too much.

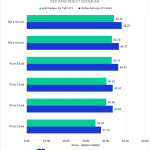

Overall Performance Impact of PCI-e Speed

To give some context for overall performance impacts, we normalized the scores to the maximum supported PCI-e bandwidth of our tested GPUs: PCI-e 4.0 x16. We then computed a weighted geometric mean of the normalized scores by and across categories—these can essentially be considered performance percentage ratios:

| NVIDIA GeForce RTX 4080 Normalized Scores | PCI-e 4.0 x16 | PCI-e 4.0 x8 | PCI-e 4.0 x4 | PCI-e 3.0 x16 | PCI-e 3.0 x8 | PCI-e 3.0 x4 |

|---|---|---|---|---|---|---|

| Video Editing / Motion Graphics | 100 | 97.81 | 88.59 | 97.34 | 88.23 | 74.56 |

| Rendering | 100 | 99.62 | 98.53 | 99.20 | 98.09 | 95.77 |

| Stable Diffusion | 100 | 99.31 | 96.73 | 99.27 | 98.79 | 97.29 |

| Overall Score | 100 | 98.91 | 94.51 | 98.60 | 94.92 | 88.56 |

| AMD Radeon RX 7900 XTX Normalized Scores | PCI-e 4.0 x16 | PCI-e 4.0 x8 | PCI-e 4.0 x4 | PCI-e 3.0 x16 | PCI-e 3.0 x8 | PCI-e 3.0 x4 |

|---|---|---|---|---|---|---|

| Video Editing / Motion Graphics | 100 | 97.41 | 89.93 | 97.82 | 89.61 | 77.76 |

| Rendering | 100 | 101.84 | 100.78 | 101.50 | 100.84 | 100.86 |

| Stable Diffusion | 100 | 102.05 | 99.10 | 97.31 | 93.26 | 99.10 |

| Overall Score | 100 | 100.41 | 96.49 | 98.86 | 94.45 | 91.94 |
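To make the aggregation concrete, the sketch below computes a weighted geometric mean over the three category rows at PCI-e 3.0 x4, using the values from the tables above. The weights used within and across categories are not listed in this article, so equal weights are an assumption here; with that assumption, the result lands within about 0.1 of the Overall Score row for both cards.

```python
import math


def weighted_geomean(scores, weights=None):
    """Weighted geometric mean; equal weights are assumed when none are given."""
    if weights is None:
        weights = [1.0] * len(scores)
    log_sum = sum(w * math.log(s) for s, w in zip(scores, weights))
    return math.exp(log_sum / sum(weights))


# Category rows at PCI-e 3.0 x4, copied from the tables above, in the order
# Video Editing / Motion Graphics, Rendering, Stable Diffusion.
nvidia_3_0_x4 = [74.56, 95.77, 97.29]
amd_3_0_x4 = [77.76, 100.86, 99.10]

print(f"RTX 4080 overall:    {weighted_geomean(nvidia_3_0_x4):.2f}")
print(f"RX 7900 XTX overall: {weighted_geomean(amd_3_0_x4):.2f}")
```

A geometric mean is a natural fit for normalized ratios like these, since a 10% loss in one category and a 10% gain in another do not simply cancel out as they would in an arithmetic average.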

Overall, we can see the general trends from our application-specific results. In video editing/motion graphics (Premiere Pro, DaVinci Resolve, and After Effects), performance degrades fairly consistently as we reduce the available PCI-e bandwidth, with 3.0 x4 scoring about 75% of the normalized baseline. Rendering (real-time with Unreal Engine and offline with Blender) and Stable Diffusion were relatively unaffected.

The big thing we want to point out is that with a modern motherboard and GPU using PCI-e 4.0, there was only about a 1% performance loss on average going from x16 to x8, as would be the case if using a consumer platform with multiple PCI-e devices. Older motherboards that are limited to PCI-e 3.0 would see a bigger impact, but even then it is only about 4-6%.

It is only at the extreme, when the lane count is reduced to x4, that performance starts to take a serious hit, especially in workflows like video editing and VFX.

How much content-creation performance are you sacrificing by limiting bandwidth to Video Cards?

It can often be challenging on consumer motherboards to have multiple add-in cards while ensuring they all have access to the maximum number of PCI-e lanes they can use. Because of this, video cards frequently find themselves running at a lower PCI-e generation or lane count. Luckily, the most common reduction—from PCI-e 4.0 x16 to 4.0 x8—has relatively little impact on performance in content creation applications.

Premiere Pro and DaVinci Resolve were the applications most sensitive to PCI-e bandwidth in our testing. Running at PCI-e 4.0 x8 or 3.0 x16 instead of 4.0 x16 should have a negligible impact on your workflow. These configurations commonly occur when installing an add-in card like a capture card or network card alongside the GPU in a current-gen motherboard, or when using an older (10th-gen Intel or Ryzen 2000) CPU/motherboard combination. Installing an add-in card on these older motherboards and dropping GPU bandwidth to PCI-e 3.0 x8 is not recommended, as it can reduce performance by 10 to 30%.

After Effects was similarly impacted by restricting PCI-e bandwidth to the GPU, but to a lesser degree. Typically, After Effects is CPU-bottlenecked, but workflows with heavy GPU effects will see impacts of 10% even at 4.0 x8 / 3.0 x16. Otherwise, reducing the available PCI-e lanes will have a minimal impact until PCI-e 3.0 x4, which typically only happens in multi-GPU setups on consumer motherboards.

In contrast, rendering performance is not significantly impacted by PCI-e bandwidth. Blender was wholly unaffected, and Unreal Engine saw a difference of less than 10% at 3.0 x4 and less than 5% in all other configurations. Using multiple GPUs or add-in cards in a system for these workflows should not cause performance issues.

Finally, Stable Diffusion was unaffected by the number or generation of PCI-e lanes available to the GPU. All of our results were within the margin of error. The only area where we expect there may be any difference would be for model load time.

When we configure the systems we sell, we balance the need for maximum performance from components with the desire for add-in cards necessary for our customers to do their work. Frequently, this means reducing the primary GPU to PCI-e 4.0 x8, which cuts its PCI-e bandwidth in half. However, as we showed in this article, this major reduction in bandwidth often has a minimal impact on real-world performance. Outside of a few uncommon situations, this testing confirms that as long as you have a modern motherboard that supports PCI-e 4.0, running the GPU at x8 speeds is not an issue.

Looking for a workstation for any of the applications we tested? You can visit our solutions page to view our recommended workstations for various software packages. If you have a unique workflow, our custom configuration page lets you assemble the hardware you need. And if at any point you want to ensure you are getting the perfect configuration for your needs—or are unsure where to start—our technology consultants are available to help.